Are Chinese AI Models Risky?

A security & SOC 2-flavored answer to a question I keep getting from founders.

Rick’s note

Quick context before the post: I get a fair number of inbound questions from founders and tech leaders (DMs, emails, over coffee, etc). A lot of them have answers that are worth sharing to others.

So I’m starting a new section of this newsletter called (maybe called Inbound?). When a question lands that I think other operators are quietly wrestling with, I’ll sanitize it (no names, no identifying details, no industry details that could give someone away) and post my answer here.

If you want to opt in, great! They’ll come through this same feed. If you’d prefer to receive only my regular weekly content, I’ll send instructions next time on how to manage your settings.

This is the first one. I hope you like it.

The Background

A founder is facing VERY expensive costs from Anthropic while undergoing an SOC 2 audit. I noted that open-weight AI models like Deepseek are showing a similar performance, but at 25x less cost per token.

Unfortunately, it’s a Chinese model hosted on Chinese servers, which led to the question…

The Question

Paraphrased and Sanitized…

“How do you feel about hooking your code to a Chinese-hosted model? I’m going through SOC 2 compliance and multiple enterprise audits, and this scares me.”

The Short Answer

DO NOT USE HOSTED SERVICES FROM CHINA!

Sending your data to China is always a no-no IMHO.

The Real Answer

It’s a great question, and the fear is real. However, there are multiple issues at play. Let’s break it down.

The model itself: its weights, its training data, its origin

The harness: the IDE plugin or agent that actually runs it

The context: what data your tooling is allowed to send

Where inference runs: your machine, a Western cloud, or a server in China

Let’s take them one at a time.

Layer 1: The model

When people say “DeepSeek is a Chinese model,” they mean the weights were trained in China. But weights are static artifacts. They’re matrices of numbers. They sit on a disk like a PDF. They can’t phone home. They can’t steal anything (at least directly). You can hash them, audit them, run them air-gapped on a laptop in a Faraday cage if you really want to.

Auditors don’t care that DeepSeek was trained in Hangzhou any more than they care that Llama was trained on Meta’s TPUs. SOC 2 focuses on where customer data flows when it’s used, not on where the model came from.

There are real concerns about Chinese-origin models (e.g. training bias, censorship of certain outputs, license terms, etc), but those are output quality and IP questions, not SOC 2 questions. Different column on the risk matrix.

Now, Chinese-origin models could be trained to secretly inject insecure code in discrete ways. Here is where you might want to be careful and include code reviews by other models.

Concern level for compliance: ~zero.

Concern level for security: ~medium.

Layer 2: The harness

This is where most of the fear should actually be redirected.

The harness is the tool that runs the model and bridges it to your environment (Cursor, Claude Code, etc.). It’s the thing that reads your filesystem, indexes your repo, decides what to forward to the model, and (in agentic modes) potentially executes shell commands on your behalf.

For security purposes, this is your real attack surface. Things to consider

What permissions does it have on your machine?

Which 3rd-party MCP servers can it write to?

How are your team members utilizing it?

Is this an open-source harness with lots of eyeballs on it?

Given the harness is where the model connects to your machine and performs actions, this is where a permissive configuration OR usage policy can expose risk… particularly if the Chinese model has been trained to inject insecure code.

Concern level for security and compliance: high.

Layer 3: The context

Here’s the underrated one. What data is your tooling allowed to send?

If your IDE plugin sends only the file you’re editing, the risk is contained. If it sends your full repo, your .env file, and your customer data sitting in test fixtures, then that’s the leak vector, regardless of where the model runs or who trained it.

The model could be Gemma 4 hosted by your sweet old grandmother, and you’d still fail audit if you piped customer PII into it without governance.

What auditors want to see:

Deny lists configured (.cursorignore, .aiderignore, Claude Code settings.local.json)

Repo segmentation: customer data doesn’t live in the same repo as code your AI tools touch

Prompt-level redaction where appropriate.

Audit logs of what got sent.

This is the layer most people don’t think about until an auditor asks. Get it right, and a lot of the model-of-the-week anxiety goes away, because the risk is bounded by what you let leave the boundary in the first place.

Concern level for compliance: high. This is where the audit lives.

Concern level for security: high. This is why you want the right model for the right context

Layer 4: Where inference runs

Now we’re at the layer where the original fear was actually justified, but only for one specific topology.

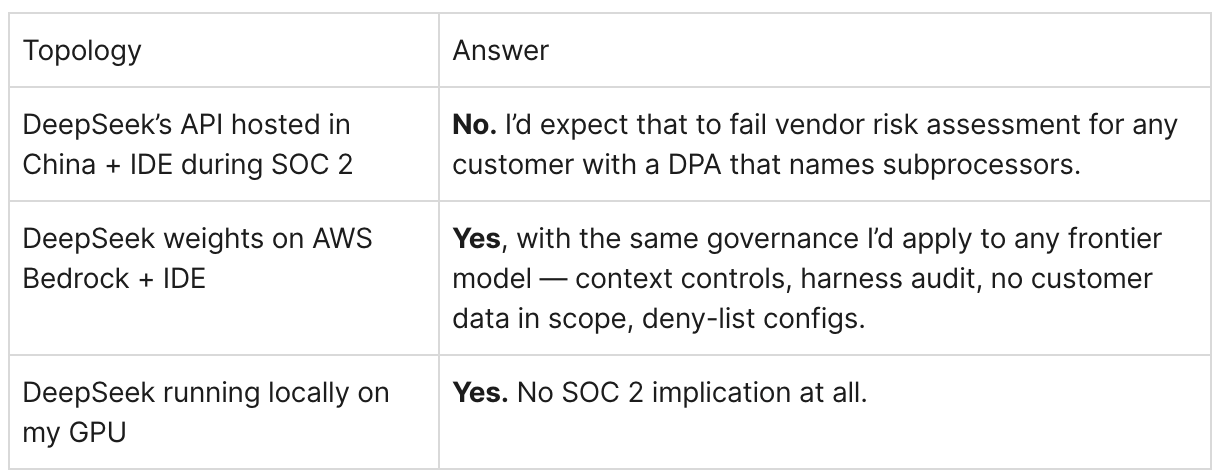

Three sub-cases:

DeepSeek’s API hosted in China: (e.g., calling deepseek.com directly). Data leaves your boundary and lands on servers under PRC jurisdiction. Chinese data laws require companies to provide data to the government on request. For SOC 2, this is a hard problem; it’s a subprocessor disclosure issue, and most enterprise customers will reject it in their vendor questionnaires. This is what should scare you.

DeepSeek’s weights running on a Western host: AWS Bedrock, Together.ai, Fireworks, Groq, etc. Data leaves your boundary, but goes to a Western SOC 2’d vendor under their controls and DPA. Same posture as using Llama via Bedrock. The model came from China; the inference is governed by a Western provider. Tractable. Routine vendor risk management.

Local inference: the weights run on your own GPU via Ollama, vLLM, llama.cpp. Data never leaves your boundary. The model’s origin becomes irrelevant. Cleanest answer for compliance. Not always the cleanest answer for cost or capability, but compliance-wise, hard to beat.

The principle: a model’s “nationality” only matters when the model is also the host. Decouple the model from the inference provider, and the China question stops being load-bearing.

So what would I actually do?

If you asked me, here’s where I’d land:

Ideally, use non-Chinese open-weight models (Gemma, Mistril)

HOWEVER, if you must use Deepseek, then…

Hosting: Use it locally or on AWS Bedrock.

Harness: Use best-in-class harnesses like Claude Code or OpenCode

Context: Don’t use it everywhere. Use it strategically for certain use cases and flows.

Check-it: Always have another model run QA and security reviews on generated code.

The fear in the original question is real, but it’s mis-targeted. The right fear isn’t “Chinese model in IDE”. It’s “ungoverned context flow to any model, anywhere, hosted by anyone.”

Fix that, and the model-of-the-week question stops mattering. You stop relitigating compliance every time a new provider ships a frontier model. You’re not auditing geopolitics; you’re auditing your own data boundary and your vendors’ controls, which is what SOC 2 was always about.

The Reply

I already gave a long answer above. In the future, I may share a sanitized version of the response that I emailed the founder.

If this hit something for you

This is the kind of answer the Inbound section is built for… questions that come up privately, but where the answer benefits a wider room than just one DM thread. If you’ve got something on your mind, reply or send it over. I’ll sanitize and answer here if I think other people are quietly working through the same thing.

Until next question…